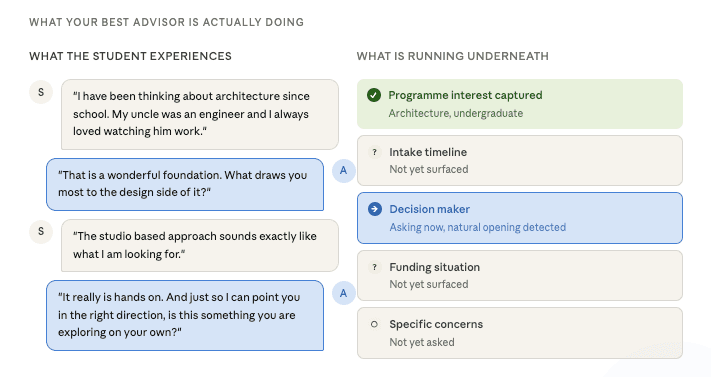

Every admissions team has that one advisor. The one who remembers what a student said months ago in a two-minute conversation. The one who never asks the same question twice. The one who knows instinctively when to push forward and when to just listen. The one who always surfaces the right question at the right moment and makes the student feel genuinely heard in the process.

That advisor is not following a script. They are running a mental checklist the entire time, updating it invisibly with every exchange. Do I know what programme they are interested in? Do I understand their timeline? Have I picked up signals about whether this is a family decision or their own? Is there a funding conversation we still need to have?

They never interrupt a student who is mid-thought. They never ask something the student already answered in their enquiry form. And when they do ask a qualifying question, it never feels like a qualifying question. It feels like a natural part of a conversation between two people who are genuinely connecting.

That is the standard we believe AI should be held to when it enters an admissions conversation. Not a lower bar because it is a machine. The same bar, because the student on the other end does not care who is running the conversation. They only care how it makes them feel.

The question is whether the technology being deployed in your institution is actually built to that standard. And in most cases, we have found that it is not.

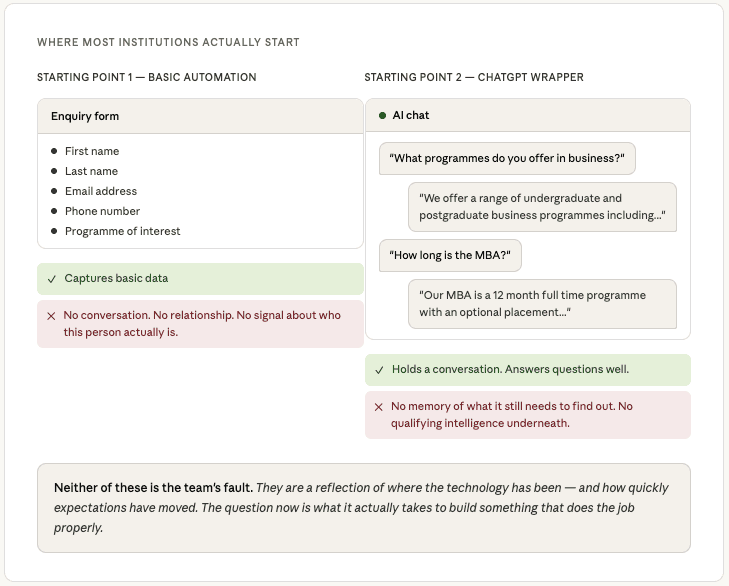

Where most institutions actually start

Most admissions teams we work with are not starting from a sophisticated AI qualification system. They are starting from one of two places.

The first is a basic automation, something that collects a name, an email address, a phone number, and maybe a programme of interest. It is functional. It captures data. But it is not a conversation. The student knows they are filling out a form, and they engage with it accordingly. There is no relationship being built, no trust being established, and no signal being picked up about who this person actually is and whether they are the right fit.

The second is a ChatGPT-style wrapper, a language model that can hold a conversation but has not been built with any qualifying intelligence underneath it. It can answer questions about programmes. It can sound friendly and helpful. But it has no memory of what it still needs to find out, no sense of whether this is the right moment to ask, and no structured understanding of what a qualified prospect actually looks like for that institution. It talks well. It qualifies poorly.

Building something that actually complements your team

The reason we care about this distinction is not technical. It is about what admissions teams actually need from AI.

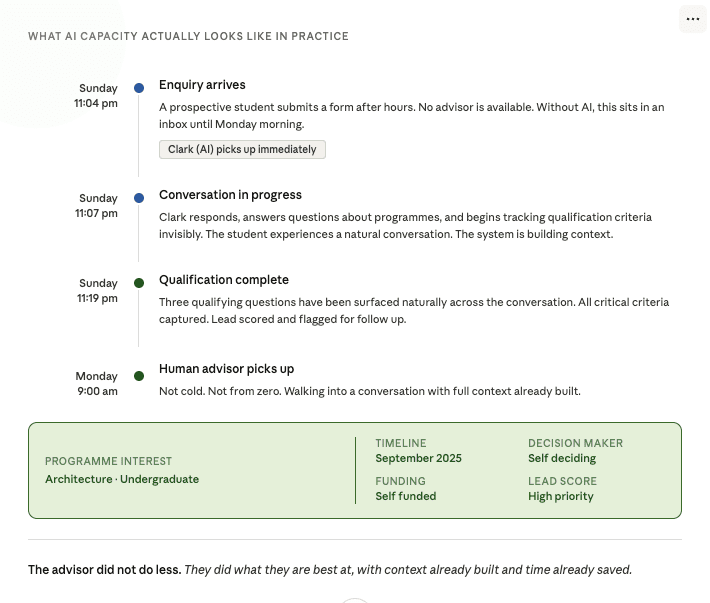

The goal is not to replace your advisors. It is to give them more hours in the day. To handle the volume of early-stage enquiries that currently fall through the cracks because there are not enough people to respond at 11 pm on a Sunday, or during peak application season, when the inbox is overwhelming. To qualify prospects intelligently so that when a human advisor does pick up the conversation, they are walking into it with context, not starting from zero.

For that to work, the AI needs to behave in a way that reflects well on your institution. It needs to ask the right questions at the right moments. It needs to remember what has already been said. It needs to know when to push and when to listen. In other words, it needs to do what your best advisor does, just at a scale and availability that no human team can match alone.

That requires a specific kind of architecture. Not a prompt. Not a wrapper. A system with memory, routing intelligence, and planning built into its foundations.

What we tried, what we learned, and what we are building instead

Getting to the architecture we use today required working through approaches that did not fully hold up. That process taught us more than the solutions themselves, so it is worth sharing the thinking.

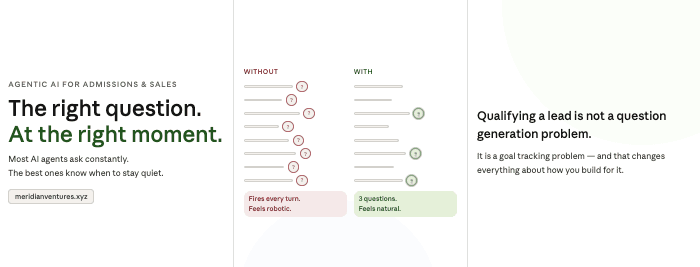

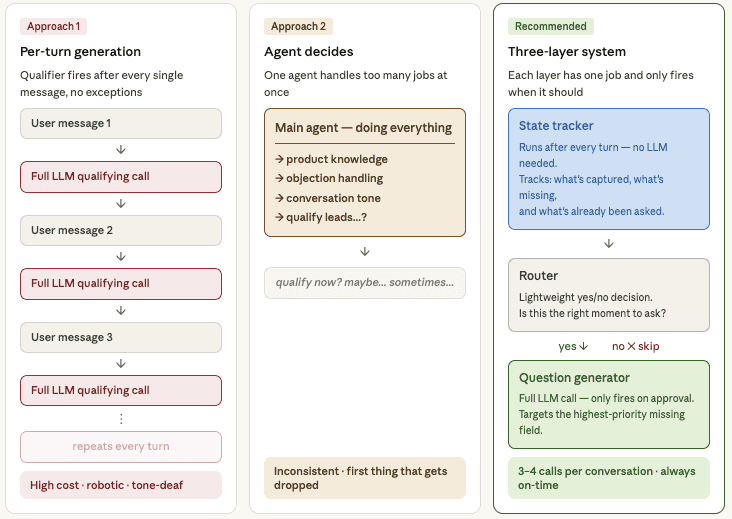

The first approach we explored was running a dedicated qualifying question generator after every single user message. The appeal was control: you could guarantee the question got asked. But in practice, it produced something that felt like an interrogation. The system mechanically inserted a qualifying question regardless of what the student had just said or how they had said it. It was expensive, it added latency, and it damaged the very thing it was supposed to support: a conversation that felt human.

The second approach was giving the main agent a tool it could call when it judged the moment was right. This felt more elegant. The problem is that you are asking one agent to simultaneously manage product knowledge, handle objections, maintain conversational tone, and make strategic decisions about when to qualify. That is too many cognitive jobs for one system. In our experience, the qualifying logic is always the first thing that gets deprioritised when the conversation gets complex, which is precisely when capturing that information matters most.

Both approaches shared a flaw that took us a while to name clearly. They both treat qualifying as a question-generation problem. Ask enough questions in the right way, and eventually you will have the data you need.

But that is not what a great admissions advisor is doing. They are running a goal-tracking system. They know exactly what they need to find out. They know what they already know. And they are choosing the right moment for every single question based on a real-time reading of the conversation. That insight is what led us to a third approach, and to the three-layer architecture we now build around.

Goal Setting and Monitoring, Routing, and Planning.

We ground our approach in established agentic design patterns, specifically three that are directly relevant to this problem.

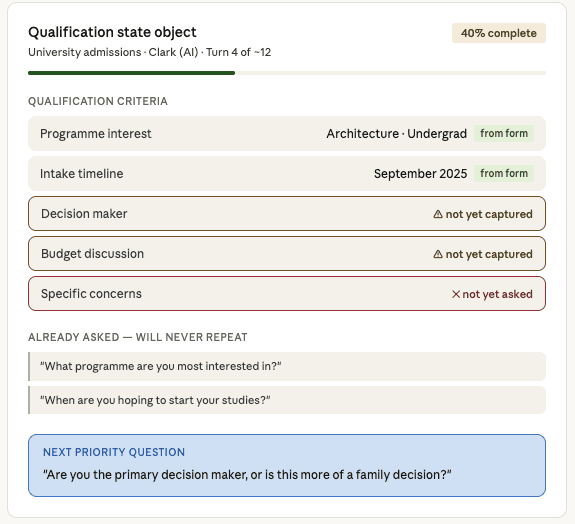

The first is Goal Setting and Monitoring. Qualifying is not open-ended. For any given institution, there is a defined set of criteria that determines whether a prospect is qualified: programme interest, intake timeline, decision-making authority, and funding situation, for example. These criteria do not change from conversation to conversation. What changes is the state of knowledge about each prospect.

This means the foundation of any qualifying system should be a Qualification State Object. A structured record, living across the entire conversation, that tracks which criteria have been captured, which remain missing, which were asked but unanswered, and critically, what has already been asked so the agent never repeats itself. This state object is the memory of the qualification process. Without it, every turn is stateless, and every question is improvised.
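
As a rough illustration of what such a state object might look like, here is a minimal Python sketch. The criteria names, the three statuses, and every method name are our own assumptions for the example, not a fixed schema; a real deployment would define them per institution.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    MISSING = "missing"      # not yet asked and not yet known
    ASKED = "asked"          # asked, but the prospect has not answered
    CAPTURED = "captured"    # a value has been recorded


# Hypothetical criteria, taken from the examples in the text.
CRITERIA = (
    "programme_interest",
    "intake_timeline",
    "decision_authority",
    "funding_situation",
)


@dataclass
class QualificationState:
    status: dict = field(
        default_factory=lambda: {c: Status.MISSING for c in CRITERIA}
    )
    values: dict = field(default_factory=dict)
    asked_log: list = field(default_factory=list)  # every criterion asked, in order

    def record_asked(self, criterion: str) -> None:
        """Note that a question was put to the prospect."""
        self.asked_log.append(criterion)
        if self.status[criterion] is Status.MISSING:
            self.status[criterion] = Status.ASKED

    def record_answer(self, criterion: str, value: str) -> None:
        """Store a captured value, however it was obtained."""
        self.values[criterion] = value
        self.status[criterion] = Status.CAPTURED

    def missing(self) -> list:
        return [c for c in CRITERIA if self.status[c] is Status.MISSING]

    def unanswered(self) -> list:
        return [c for c in CRITERIA if self.status[c] is Status.ASKED]

    def is_complete(self) -> bool:
        return all(s is Status.CAPTURED for s in self.status.values())
```

Because `asked_log` survives across turns, whatever generates the next question can check it before asking, which is what keeps the agent from repeating itself.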

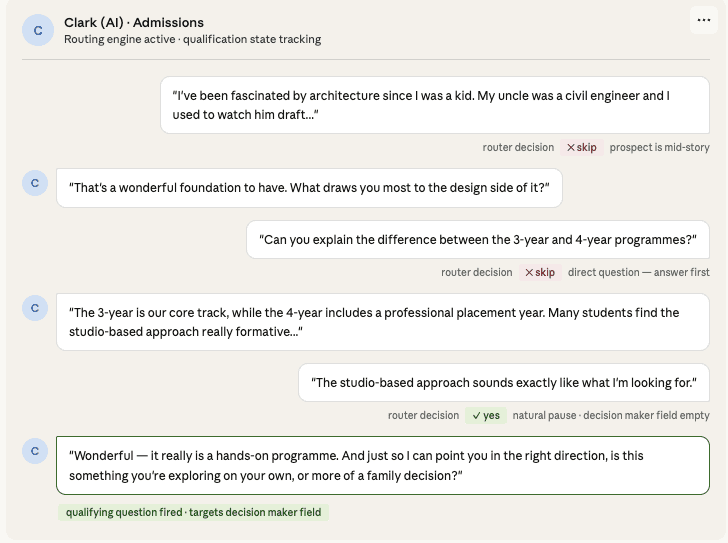

The second pattern is Routing. Instead of a binary choice between always firing and letting the main agent decide, introduce a lightweight routing layer that answers one specific question before every response: is this the right moment to attempt a qualifying question?

This router is fast and inexpensive. It looks at the current qualification state, the emotional register of the conversation, how recently a qualifying question was asked, and whether the prospect has just raised something that needs a direct response first. It outputs a simple decision. Only when it says yes does the qualifying question generator fire. This gives you control without the cost of running a full language model after every turn.
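
To make the idea concrete, here is a deliberately simple rule-based sketch in Python. It assumes the four signals described above have already been extracted into a small context object; the field names, the register labels, and the two-turn cooldown are illustrative assumptions, and a production router could equally be a small classifier.

```python
from dataclasses import dataclass


@dataclass
class TurnContext:
    missing_criteria: int        # criteria still unknown in the state object
    turns_since_last_ask: int    # turns since the last qualifying question
    emotional_register: str      # hypothetical labels: "calm", "anxious", "frustrated"
    needs_direct_answer: bool    # prospect just raised something to address first


MIN_TURNS_BETWEEN_ASKS = 2  # assumed cooldown, to be tuned per institution


def should_ask_now(ctx: TurnContext) -> bool:
    """Return True only when a qualifying question is likely to land well."""
    if ctx.missing_criteria == 0:
        return False  # nothing left to find out
    if ctx.needs_direct_answer:
        return False  # answer the prospect's point first
    if ctx.emotional_register in {"anxious", "frustrated"}:
        return False  # listen rather than push
    return ctx.turns_since_last_ask >= MIN_TURNS_BETWEEN_ASKS
```

Only when `should_ask_now` returns `True` would the more expensive question generator be invoked; on every other turn the main agent simply responds.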

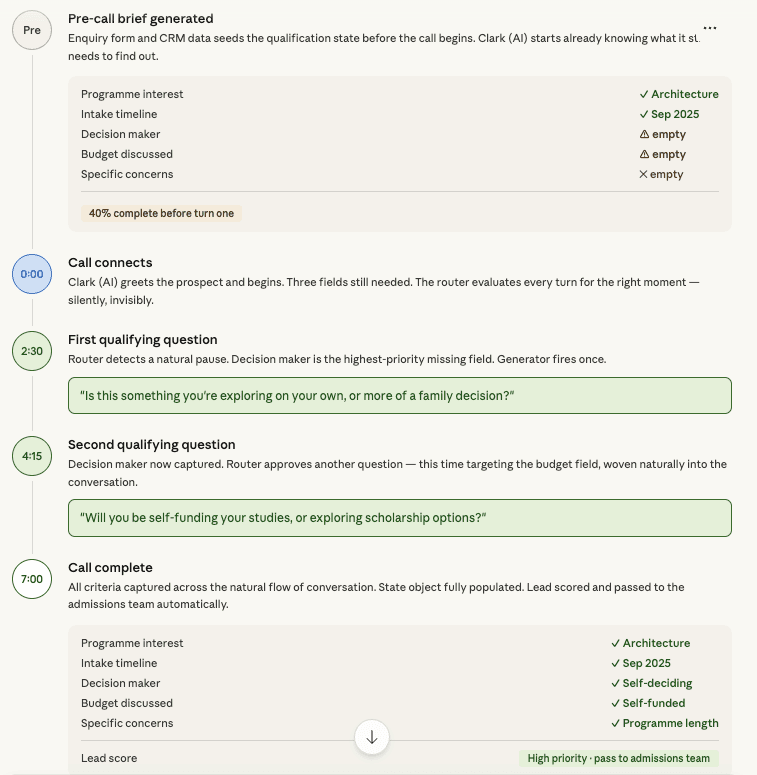

The third pattern is Planning. This is where the approach becomes sophisticated, particularly for outbound voice scenarios. Rather than generating qualifying questions reactively across a conversation, you can plan the qualification arc before the conversation begins.

If a prospect has already filled out an enquiry form, some of your qualification criteria are already met before the first message. If you know which programme they expressed interest in, which intake they selected, and which campus they indicated, those fields in the Qualification State Object can be pre-populated. The agent begins the conversation already knowing what it still needs to find out, and with a prioritised sequence for finding it out.

For outbound calls specifically, this planning step can run in the seconds before the call connects, using whatever data exists in your CRM or enquiry system. The agent is not starting from zero. It is starting from a brief.
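
A minimal sketch of that planning step, assuming the CRM or enquiry record arrives as a plain dictionary. The field names and the priority order below are hypothetical and would be set by each admissions team.

```python
# Hypothetical priority order for finding things out, highest priority first.
PRIORITY = [
    "programme_interest",
    "intake_timeline",
    "campus",
    "decision_authority",
    "funding_situation",
]


def plan_qualification(crm_record: dict) -> dict:
    """Build a pre-conversation brief from whatever the enquiry form captured.

    Fields that are absent or empty in the record are treated as unknown.
    """
    known = {k: crm_record[k] for k in PRIORITY if crm_record.get(k)}
    to_ask = [k for k in PRIORITY if k not in known]
    return {"known": known, "to_ask": to_ask}


# Sample enquiry data, invented for the example.
record = {
    "programme_interest": "MBA",
    "intake_timeline": "September",
    "campus": "Main campus",
    "funding_situation": "",  # left blank on the form
}
brief = plan_qualification(record)
print(brief["to_ask"])
```

The `known` part of the brief is what would pre-populate the state object, and `to_ask` is the prioritised sequence the agent starts the conversation with.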

What this looks like for your team

In practice, a conversation running on this architecture does not feel technical to anyone involved. The student experiences something that feels like talking to a person who is genuinely paying attention. The admissions advisor who picks up the qualified lead afterwards walks into a conversation with context already built: what the student cares about, what questions have already been answered, and what still needs to be explored.

What changes operationally is the scale at which your team can work. Enquiries that would previously sit unanswered overnight or over a weekend get handled intelligently, in real time, without anyone on your team needing to be available. Peak season pressure eases because the early qualification work is already done before a human advisor ever enters the conversation. And the conversations that do reach your team are warmer, more informed, and more likely to convert.

That is the practical outcome of getting the architecture right. Not a smarter chatbot. A genuine extension of your admissions capacity, built to the standard your best advisors have already set.

The questions worth answering to build superpowers for your admissions team

Every institution is different. The criteria that define a qualified prospect for a postgraduate engineering programme are not the same as those for an undergraduate business intake or a short course enquiry. The qualification logic needs to reflect your programmes, your student profile, and your team's priorities, not a generic template.

That is why the first conversation we have with any admissions team is not about technology. It is about their best advisor.

What do they ask? When do they ask it? What tells them a student is the right fit?

The architecture follows from that. Not the other way around.

Admissions is a high-stakes, relationship-sensitive process. Prospective students are making significant decisions about their futures, and they are evaluating your institution's culture and quality through every interaction, including the AI ones.

An agent that asks a tone-deaf question at the wrong moment does not just fail to qualify a lead. It actively damages the relationship and the institution's brand. An agent that tracks qualification goals intelligently, routes decisions carefully, and generates questions that feel human and well-timed does the opposite. It builds trust, it gathers the information your team needs, and it creates a conversation that the prospect actually remembers positively.

That is the difference between a chatbot that generates questions and an AI system that genuinely complements your admissions team. One produces data. The other produces conversations worth having, and students who feel, from their very first interaction, that your institution understands them.

If this resonates, let us talk

We work with admissions teams across the Middle East to build AI that actually complements the way your advisors work, rather than just automating around them. If you are thinking about what qualifying intelligence could look like for your institution, or if you are already running something and want to understand what is missing underneath it, we would genuinely enjoy the conversation.

Reach out directly at